How to Extract the Intercept from a Linear Regression Model in R (Example)

In this R article you’ll learn how to return the intercept of a linear regression model.


So without further ado, let’s dive into it.

Creation of Example Data

We use the following data as the basis for this R tutorial:

```r
set.seed(894357)                                 # Drawing some random data
x1 <- rnorm(200)
x2 <- rnorm(200) - 0.3 * x1
x3 <- rnorm(200) + 0.4 * x1 - 0.2 * x2
x4 <- rnorm(200) + 0.3 * x1 - 0.2 * x3
x5 <- rnorm(200) - 0.03 * x2 + 0.4 * x3
y <- rnorm(200) + 0.1 * x1 - 0.25 * x2 + 0.15 * x3 - 0.4 * x4 - 0.25 * x5
data <- data.frame(y, x1, x2, x3, x4, x5)
head(data)                                       # Returning first lines of data
#            y          x1         x2         x3         x4          x5
# 1  1.1684410 -1.58353017 -1.2234898 -0.3166072  1.5705093 -0.84385144
# 2 -0.1800286  0.09742054  0.7965851  1.5848084  0.2988516  1.89817234
# 3  1.8233851  1.27806258  0.5094414  1.6230221 -0.4993945 -1.75827901
# 4  0.8660731 -0.79919138  0.3025732 -0.4434784 -0.9492395  0.01970439
# 5  3.6639976 -0.77383199 -1.1410142  0.1921179 -1.4590195 -1.64504845
# 6 -0.8836137  0.48293479  0.2443208  1.5685126 -0.2437507 -0.43371700
```

As you can see in the previous RStudio console output, our example data contains six columns: the variable y is the target variable, and the remaining variables x1 to x5 are the predictor variables.

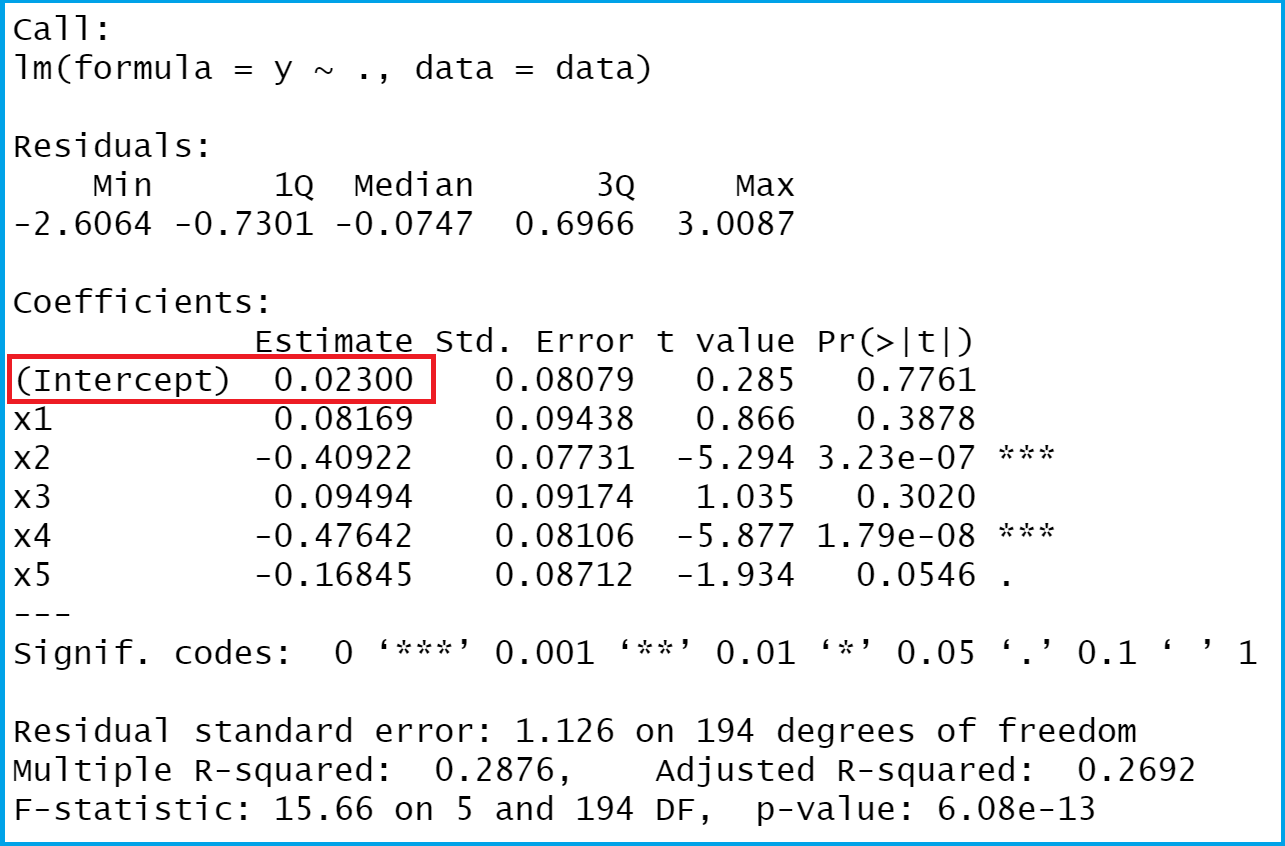

Let’s estimate our regression model using the lm and summary functions in R:

```r
mod_summary <- summary(lm(y ~ ., data))          # Executing linear model
mod_summary                                      # Return linear regression summary
```

Figure 1 shows the value we want to extract from our linear regression model: the intercept.
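To make concrete what this value means, here is a small sketch (a check added for illustration, not part of the original workflow). It recreates the example data, fits the model, and confirms two standard properties of an OLS intercept: it equals the model's prediction when every predictor is set to zero, and it equals mean(y) minus the sum of each slope times the mean of its predictor. The object names mod, zero_point, int_pred and int_means are illustrative choices.

```r
# Recreate the example data from the beginning of this tutorial
set.seed(894357)
x1 <- rnorm(200)
x2 <- rnorm(200) - 0.3 * x1
x3 <- rnorm(200) + 0.4 * x1 - 0.2 * x2
x4 <- rnorm(200) + 0.3 * x1 - 0.2 * x3
x5 <- rnorm(200) - 0.03 * x2 + 0.4 * x3
y <- rnorm(200) + 0.1 * x1 - 0.25 * x2 + 0.15 * x3 - 0.4 * x4 - 0.25 * x5
data <- data.frame(y, x1, x2, x3, x4, x5)

mod <- lm(y ~ ., data)                           # Fitted model object
b <- coef(mod)                                   # Named coefficient vector

# (1) The intercept is the predicted y when all predictors equal zero
zero_point <- data.frame(x1 = 0, x2 = 0, x3 = 0, x4 = 0, x5 = 0)
int_pred <- unname(predict(mod, newdata = zero_point))

# (2) OLS identity (model with intercept): b0 = mean(y) - sum(b_j * mean(x_j))
slopes <- b[-1]
int_means <- mean(data$y) - sum(slopes * colMeans(data[, names(slopes)]))

int_pred                                         # Same value as the intercept
int_means                                        # Same value as the intercept
```

The second identity holds because OLS residuals of a model with an intercept sum to zero, so the fitted regression plane passes through the point of means.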

Example: Extracting Intercept from Linear Regression Model

The following R programming syntax shows how to extract the intercept of our linear regression model:

```r
mod_summary$coefficients[1, 1]                   # Pull out intercept
# 0.0230042
```

Our intercept is 0.0230042.
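Indexing by position works because the coefficient matrix of summary() lists the intercept in the first row and the estimates in the first column. A minimal sketch of two alternatives, again recreating the example data so the block runs on its own: indexing the coefficient matrix by its row and column names, and calling coef() on the lm object directly. The object names int_pos, int_name and int_coef are hypothetical.

```r
# Recreate the example data from the beginning of this tutorial
set.seed(894357)
x1 <- rnorm(200)
x2 <- rnorm(200) - 0.3 * x1
x3 <- rnorm(200) + 0.4 * x1 - 0.2 * x2
x4 <- rnorm(200) + 0.3 * x1 - 0.2 * x3
x5 <- rnorm(200) - 0.03 * x2 + 0.4 * x3
y <- rnorm(200) + 0.1 * x1 - 0.25 * x2 + 0.15 * x3 - 0.4 * x4 - 0.25 * x5
data <- data.frame(y, x1, x2, x3, x4, x5)

mod <- lm(y ~ ., data)                           # Fitted model object
mod_summary <- summary(mod)                      # Summary object

# Position-based extraction, as in the example above
int_pos <- mod_summary$coefficients[1, 1]

# Name-based extraction from the coefficient matrix
int_name <- mod_summary$coefficients["(Intercept)", "Estimate"]

# Directly from the lm object, without calling summary()
int_coef <- unname(coef(mod)["(Intercept)"])

c(int_pos, int_name, int_coef)                   # All three values agree
```

The name-based versions are safer if the model is ever fitted without an intercept (for example y ~ . - 1): the position-based call would then silently return the slope of x1, whereas the matrix lookup stops with a "subscript out of bounds" error and coef() returns NA.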

Video, Further Resources & Summary

A video on my YouTube channel that illustrates the R code of this article will be added soon.

In addition to the video, you might want to read the other articles on this website. I have already published numerous posts about regression models:

- Specify Reference Factor Level in Linear Regression

- Add Regression Line to ggplot2 Plot in R

- Extract Regression Coefficients of Linear Model

- R Programming Examples

Summary: This post showed how to extract the intercept of a regression model in the R programming language. In case you have any further questions, don’t hesitate to let me know in the comments.